How will cobots integrate into the post-COVID workplace?

Ian Ferguson, vice president, marketing and strategic alliances at Lynx Software Technologies, looks at the role of cobots after the pandemic.

Factories are increasingly being challenged to be nimble and adept. Instead of creating hundreds, thousands or even millions of the same thing, the new paradigm is flexibility, with equipment that can be swiftly reconfigured to support different builds.

The emergence of Cobots, collaborative robots intended to interact with humans in a shared space or to work safely in close proximity to them, is an important development.

Cobots stand in stark contrast to traditional industrial robots, which work autonomously with safety assured by isolation from human contact. Cobots currently account for a very small percentage of the overall robotics market, but this is an area we (and a number of recognised analysts) believe will grow rapidly in the next five years, with applications in manufacturing, healthcare and retail.

As Cobots are placed in the vicinity of humans, this is a segment where the equipment must work all the time in a deterministic way. The safety of the human working alongside the Cobot is of paramount importance.

Safety certifications and processes need to be defined and strictly observed if this market is to form successfully. This is an area where there is a lot of focus on AI to improve the user experience with these types of machines.

The desire to keep these platforms viable for a long period of time means that there needs to be a secure way to deliver software updates that enable new capabilities to these platforms.
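As a minimal sketch of what "secure" means here, an update should be rejected unless its integrity and origin can be checked before it is applied. The example below uses a shared-secret HMAC from the Python standard library purely for illustration. A production system would more likely use asymmetric signatures and a hardware root of trust, and the function names (`sign_update`, `verify_update`, `apply_update`) are hypothetical.

```python
import hashlib
import hmac


def sign_update(image: bytes, key: bytes) -> str:
    """Compute an authentication tag for an update image (vendor side)."""
    return hmac.new(key, image, hashlib.sha256).hexdigest()


def verify_update(image: bytes, tag: str, key: bytes) -> bool:
    """Check the tag in constant time before trusting the image (device side)."""
    expected = sign_update(image, key)
    return hmac.compare_digest(expected, tag)


def apply_update(image: bytes, tag: str, key: bytes) -> int:
    """Refuse to install anything that fails verification.

    Returns the number of bytes that would be written; the actual flashing
    step is outside the scope of this sketch.
    """
    if not verify_update(image, tag, key):
        raise ValueError("update rejected: authentication tag does not match")
    return len(image)
```

The point of the sketch is the ordering: verification happens first, and a failed check stops the update outright instead of logging a warning and continuing.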

For some of the larger platforms, we also see moves towards greater modularity, enabling hardware upgrades over time. Manufacturers of these platforms do not want to be tied to a specific hardware vendor, especially in an area as dynamic as artificial intelligence, where there will be many events such as acquisitions, companies ceasing to trade, and changes to performance leadership rankings in the next few years.

This issue is compounded by the fact that Cobots necessarily will need to be connected. Not only is there a need to share sensor data and receive instructions, but there is also a need for security and other updates.

We need to consider, then, that a security incursion may impact not just the system itself, but potentially any device accessible from that network connection. Security needs to be baked in from the get-go rather than treated as an afterthought and, as a lot of attacks have shown, a complex system is only as strong as its weakest link.

From a software perspective, the system must be architected to guarantee that it cannot be reconfigured after boot and that no application can accidentally (or maliciously) cause the robot to fail.
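The "cannot be reconfigured after boot" idea can be illustrated with a configuration object that is writable only during start-up and then sealed. This is a sketch of the policy only: in a real platform the guarantee would be enforced by the hypervisor and hardware, not by application code, and the class name `BootConfig` and its methods are hypothetical.

```python
from types import MappingProxyType


class BootConfig:
    """Configuration that can be written during boot and never afterwards."""

    def __init__(self):
        self._settings = {}
        self._sealed = False

    def set(self, name, value):
        """Record a setting; only permitted before seal() is called."""
        if self._sealed:
            raise PermissionError("configuration is sealed after boot")
        self._settings[name] = value

    def seal(self):
        """End the boot phase and swap in a read-only view of the settings."""
        self._settings = MappingProxyType(dict(self._settings))
        self._sealed = True

    def get(self, name):
        """Read a setting; allowed at any time."""
        return self._settings[name]
```

Once `seal()` has run, any later call to `set()` raises an error, so an application that misbehaves after boot cannot alter the settings the safety-critical parts of the system depend on.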

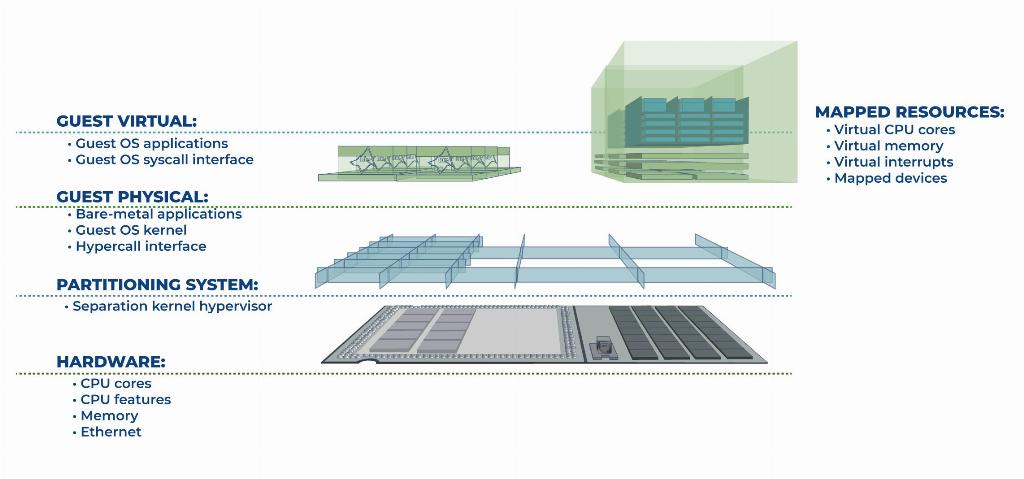

This challenge has to be addressed in the context of delivering these systems within a cost, power and footprint envelope that makes them commercially attractive. This implies shrinking multiple systems onto a single consolidated board (and, in an increasing number of cases, a single heterogeneous multicore chip).

These systems need to run rich operating systems like Linux and Windows while also guaranteeing the real-time behaviour of the it-simply-must-always-respond-this-way elements of the platform.

These systems are referred to as mixed criticality systems. Applications must be compartmentalised to ensure that a fault in one application cannot cause other elements of the system to fail.
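One way to picture this compartmentalisation is as a static partition table in which no two applications share a CPU core or a region of memory. The sketch below checks such a table for overlaps. It assumes a deliberately simplified model (exclusive cores, one contiguous memory range per partition), and the `Partition` fields and the `check_isolation` function are hypothetical, not any particular hypervisor's configuration format.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Partition:
    name: str
    cores: FrozenSet[int]   # CPU cores assigned exclusively to this partition
    mem_start: int          # first byte of the partition's memory range
    mem_size: int           # length of the memory range in bytes


def check_isolation(partitions: List[Partition]) -> List[str]:
    """Return a description of every shared core or overlapping memory range."""
    problems = []
    for i, a in enumerate(partitions):
        for b in partitions[i + 1:]:
            shared = a.cores & b.cores
            if shared:
                problems.append(
                    f"{a.name} and {b.name} share cores {sorted(shared)}"
                )
            a_end = a.mem_start + a.mem_size
            b_end = b.mem_start + b.mem_size
            # Two half-open ranges overlap when each starts before the other ends.
            if a.mem_start < b_end and b.mem_start < a_end:
                problems.append(f"{a.name} and {b.name} overlap in memory")
    return problems
```

An empty result means that, under this model, the rich operating system and the real-time control application have no shared resource through which one could disturb the other.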

The pandemic is accelerating the drive towards cobots, providing more acceptance toward increased digital transformation and automation. Environments where maintaining six feet of separation between humans is challenging or incredibly inefficient will start to deploy this technology.

This is where Lynx focuses, providing a hypervisor that securely partitions and isolates applications while guaranteeing microsecond-level response to time-critical events.

Lynx Software Technologies www.lynx.com